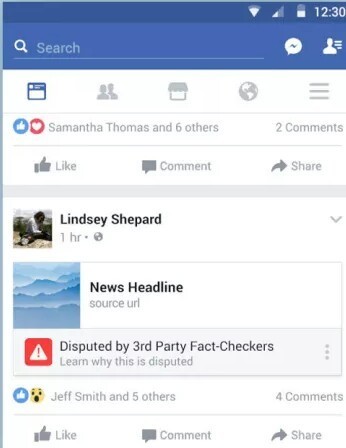

BuzzFeed News obtained an email sent by a Facebook executive to its fact-checking partners that for the first time shared internal data about the program. A news story that's been labeled false by Facebook's third-party fact-checking partners sees its future impressions on the platform drop by 80%, according to new data contained in an email sent by a Facebook executive and obtained by BuzzFeed News. The message also said it typically takes "over three days" for the label to be applied to a false story, and that Facebook wants to work with its partners to speed the process....

Social media analyst Jonathan Albright got a call from Facebook the day after he published research last week showing that the reach of the Russian disinformation campaign was almost certainly larger than the company had disclosed. While the company had said 10 million people read Russian-bought ads, Albright had data suggesting that the audience was at least double that — and maybe much more — if ordinary free Facebook posts were measured as well. Albright welcomed the chat with three company officials. But he was not pleased to discover that they had done more than talk about their concerns regarding his research. They also had scrubbed from the Internet nearly everything — thousands of Facebook posts and the related data — that had made the work possible. Never again would he or any other researcher be able to run the kind of analysis he had done just days earlier. “This is public interest data,” Albright said Wednesday, expressing frustration that such a rich trove of information had disappeared — or at least moved somewhere the public can’t see it. “This data allowed us to at least reconstruct some of the pieces of the puzzle. Not everything, but it allowed us to make sense of some of this thing.”...

Two weeks ago, development studies journal Third World Quarterly published an article that, by many common metrics used in academia today, will be the most successful in its 38-year history. The paper, in a few days, achieved a higher Altmetric Attention Score than any other TWQ paper. By the rules of modern academia, this is a triumph. The problem is, the paper is not.

Academic articles are now evaluated according to essentially the same metrics as Buzzfeed posts and Instagram selfies.

The article, “The case for colonialism,” is a travesty, the academic equivalent of a Trump tweet, clickbait with footnotes. Its author, Bruce Gilley, a professor at the Department of Political Science at Portland State University, sets out to question the “orthodoxy” of the last 100 years that has given colonialism a bad name.

He argues that western colonialism was “as a general rule, both objectively beneficial and subjectively legitimate,” and goes on to say that instead of taking a critical view of colonial and imperial history, we should be “recolonising some areas” and “creating new Western colonies from scratch”.

So how did this article rise to such prominence and apparent success? Arguments for colonialism have been made in academia before; however, Gilley’s article contributes no new evidence or datasets, and discussing its empirical shortfalls and blindness to vast sections of colonial history would go far beyond the scope of this post.

With the rise of Internet sales and the eventual explosion of online reviews, a strong correlation has formed between online restaurant ratings and business revenue. For many restaurateurs, favorable reviews translate to better revenue and a stronger bottom line. However, the seedy side of the Web has also led to fake reviews of everything from bullion to bison. Now comes word that researchers at the University of Chicago have successfully taught a neural network to write realistic fake restaurant reviews. The idea was to see if a machine could learn to create malicious negative (or glowing) reviews to either kill or inflate online ratings. The results were startling. Humans were unable to differentiate between the machine-generated and human-generated reviews. With these new-age "ghost writers" now pushing commerce, one has to wonder if good taste is a thing of the past. Literally. Cartoon by Ed Hall.

Here’s what we know, so far, about Facebook’s recent disclosure that a shadowy Russian firm with ties to the Kremlin created thousands of ads on the social media platform that ran before, during and after the 2016 presidential election: The ads “appeared to focus on amplifying divisive social and political messages across the ideological spectrum,” including race, immigration and gun rights, Facebook said. The users who purchased the ads were fakes. Attached to assumed identities, their pages were allegedly created by digital guerrilla marketers from Russia hawking information meant to disrupt the American electo

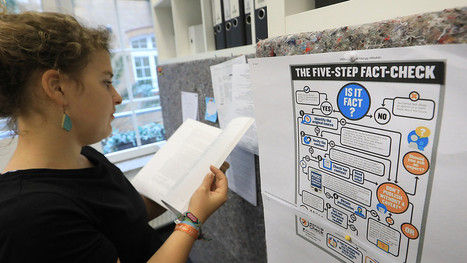

MADRID — “Nos encanta la verdad.” We love the truth. Political fact-checking has existed in the United States for many years. FactCheck.org was established in 2003, and The Washington Post Fact Checker and PolitiFact were launched in 2007. In recent years, this movement representing a new form of accountability journalism has exploded around the globe. Now, there are 126 fact-checking organizations in 49 countries. Clearly, voters in many countries care about and want to know the truth. About 190 fact-checkers from 54 countries attended the fourth annual Global Fact-Checking Summit, July 5-7, 2017. The International Fact-Checking Network at Poynter Institute hosted the summit. The first meeting of fact-checkers from around the world took place in 2014, with 50 fact-checkers. Now the community has grown so much that we needed a “speed meeting” session for introductions....

I’m here to preach the gospel of quality news, and to talk about how combating fake news and hate news can not only be good journalism, but… good business.” Thus, Daily Beast editor-in-chief and managing director John Avlon opened MediaPost's Publishing Insider Summit, with a keynote addressing the hot-button issue that has come to symbolize America’s dysfunction and threaten its democracy — while mapping a way forward. Avlon began by acknowledging the decline of trust in media, pointing to a number of trends, including the rise of partisan media, which was an “attempt to balance implicit bias on the part of mainstream media with explicit bias,” as well as the fragmentation of the broader media environment....

Much like it did before the elections in France Sunday, Facebook is taking steps to curb fake news and delete fake accounts prior to the upcoming elections in the U.K. The social network announced in a Facebook Security note last month that it took action against some 30,000 fake accounts in France, and it ran full-page ads in several newspapers in that country—including Le Monde, Les Échos, Libération, Le Parisien and 20 Minutes—containing tips on how users can spot fake news, similar to the information it began sharing atop its News Feed earlier in April. Facebook took similar steps in the U.K., with BBC reporting that ads ran in newspapers including The Times, The Guardian and the Daily Telegraph providing 10 red flags for users of the social network to watch out for in determining whether posts are real or fake....

Good things can happen when a crowd goes to work on trying to figure out a problem in journalism. At the same time, completely crowdsourced news investigations can go bad without oversight — as when, for example, a group of Redditors falsely accused someone of being the Boston Marathon bomber. An entirely crowdsourced investigation with nobody to oversee it or pay for it will probably go nowhere. At the same time, trust in the media is low and fact-checking efforts have become entwined with partisan politics. So what would happen if you combined professional journalism with fact checking by the people? On Monday evening, Wikipedia founder Jimmy Wales launched Wikitribune, an independent site (not affiliated with Wikipedia or the Wikimedia Foundation) “that brings journalists and a community of volunteers together” in a combination that Wales hopes will combat fake news online — initially in English, then in other languages....

Google search is developing a credibility problem that could be blamed on artificial intelligence. In a statement, the company said its algorithms for generating "featured snippet" answers can cause "instances when we feature a site with inappropriate or misleading content," resulting in false answers. However, Google is also the source--and funding--behind much of the fake news on the Internet, thanks to a similar lack of curation for YouTube....

When Americans encounter news on social media, how much they trust the content is determined less by who creates the news than by who shares it, according to a new experimental study from the Media Insight Project, a collaboration between the American Press Institute and The Associated Press-NORC Center for Public Affairs Research. Whether readers trust the sharer, indeed, matters more than who produces the article — or even whether the article is produced by a real news organization or a fictional one, the study finds. As social platforms such as Facebook or Twitter become major thoroughfares for news, the news organization that does the original reporting still matters. But the study demonstrates that who shares an article on a social media site like Facebook has an even bigger influence on whether people trust what they see....

The French advertising powerhouse and the British government want more control over where their ads run. Havas is pulling all spending from Google and YouTube in the United Kingdom, citing the desire to have more control of its inventory in hopes of keeping brands away from inappropriate or offensive content. According to a report in The Guardian, the French advertising giant’s decision came after talks broke down related to Google’s inability to “provide specific reassurances” related to where video and display ads appear. The report cites content showing up in YouTube alongside videos of white nationalists and terrorists. The news about Havas—which spends around 175 million euros ($188.2 million) annually on digital advertising clients in the U.K.—comes alongside a report that the British government and other organizations also pulled their ads from the tech giant....

When Twitter user Rory Cellan-Jones asked Google Home if Barack Obama is planning a coup, the digital assistant device responded by detailing a bogus conspiracy theory about the former president plotting a communist scheme to take over the government.

"According to details exposed in Western Centre for Journalism's exclusive video, not only could Obama be in bed with the communist Chinese, but Obama may in fact be planning a communist coup d'état at the end of his term in 2016," the smart home device's robotic voice explained, though it stumbled over the word "d'etat."

But this outlandish response isn't restricted to Google Home. Rather, it highlights a problem with how the search engine responds to queries in the form of "featured snippets" — short, direct answers highlighted at the top of its search results....

Russian efforts to meddle in US politics didn't end at Facebook and Twitter. The tentacles of one campaign extended to YouTube, Tumblr and even Pokémon Go! One Russian-linked campaign posing as part of the Black Lives Matter movement used Facebook, Instagram, Twitter, YouTube, Tumblr and Pokémon Go and even contacted some reporters in an effort to exploit racial tensions and sow discord among Americans, CNN has learned. The campaign, titled "Don't Shoot Us," offers new insights into how Russian agents created a broad online ecosystem where divisive political messages were reinforced across multiple platforms, amplifying a campaign that appears to have been run from one source -- the shadowy, Kremlin-linked troll farm known as the Internet Research Agency. A source familiar with the matter confirmed to CNN that the Don't Shoot Us Facebook page was one of the 470 accounts taken down after the company determined they were linked to the IRA. CNN has separately established the links between the Facebook page and the other Don't Shoot Us accounts....

We may already be living in a truthless dystopia.

It’s no secret that the professional media is in crisis. But what if the situation is even worse than those of us in the industry thought?

What if vast swaths of the public no longer believe the news on controversial political stories, even when it comes from established media outlets?

What if the public ascribes no value to professional news organizations?

That situation may sound terrifying to journalists and media owners, but we may be heading there quickly.

Researchers at Yale University have found that 40% of the public are now willing to dismiss perfectly accurate stories, regardless of the source. What may be even more disturbing is that articles sourced to a top news brand are perceived to have no more credibility than articles sourced to a joke brand, or none at all.

Maggie Haberman started working at the New York Times in 2015 — with just one little problem. She didn’t know what she was supposed to be doing there. “I was looking for a lane, so I picked up Trump because nobody seemed very interested in Trump,” Haberman said on the latest episode of Recode Decode, hosted by Kara Swisher. “I knew him and I knew his people.” Just two years later, she’s one of the NYT’s best-known reporters thanks to her coverage of Trump’s campaign and, now, his presidency. But for most of her career, Haberman had dreamed of one day being the Times’ chief New York correspondent, covering the city’s “broken” political system. Speaking with Swisher at the 2017 Texas Tribune Festival, along with Washington Post reporter David Fahrenthold, Haberman said she learned “the number one rule” about Donald Trump while working at the New York Post, which he would call frequently as a “source” of gossip about himself. “In his brain, two things are true,” she said. “No one speaks for him except him, even if he actually has a spokesman, and he believes that facts can be changed so that they can be something other than what you thought they were a day ago.”

There's a whole industry dedicated to producing fake US news in Macedonia – and it's getting ready for 2020. Veles used to make porcelain for the whole of Yugoslavia. Now it makes fake news. This sleepy riverside town in Macedonia is home to dozens of website operators who churn out bogus stories designed to attract the attention of Americans. Each click adds cash to their bank accounts. The scale is industrial: Over 100 websites were tracked here during the final weeks of the 2016 U.S. election campaign, producing fake news that mostly favored Republican candidate for President Donald Trump....

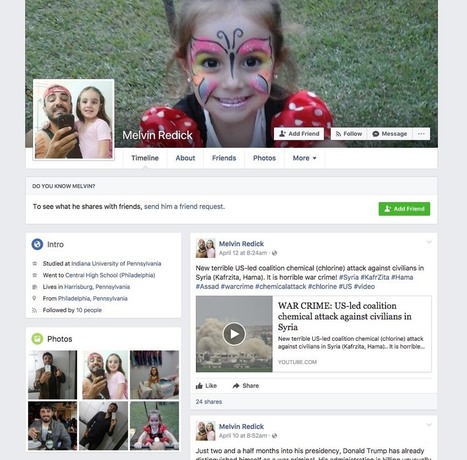

Sometimes an international offensive begins with a few shots that draw little notice. So it was last year when Melvin Redick of Harrisburg, Pa., a friendly-looking American with a backward baseball cap and a young daughter, posted on Facebook a link to a brand-new website.

“These guys show hidden truth about Hillary Clinton, George Soros and other leaders of the US,” he wrote on June 8, 2016. “Visit #DCLeaks website. It’s really interesting!”

Mr. Redick turned out to be a remarkably elusive character. No Melvin Redick appears in Pennsylvania records, and his photos seem to be borrowed from an unsuspecting Brazilian. But this fictional concoction has earned a small spot in history: The Redick posts that morning were among the first public signs of an unprecedented foreign intervention in American democracy.

A report on Alex Jones’ InfoWars claiming child sex slaves have been kidnapped and shipped to Mars is untrue, NASA told The Daily Beast on Thursday. “There are no humans on Mars. There are active rovers on Mars. There was a rumor going around last week that there weren’t. There are,” Guy Webster, a spokesperson for Mars exploration at NASA, told The Daily Beast. “But there are no humans.” On Thursday’s program, the InfoWars host welcomed guest Robert David Steele onto The Alex Jones Show, which airs on 118 radio stations nationwide, to talk about kidnapped children he said have been sent on a two-decade mission to space....

Imagine opening your morning newspaper (itself a novelty these days) and finding a story about, not just life, but entire civilizations on another planet, attributed to one of the world’s foremost astronomers. Would you believe it, or might you suspect that some “alternative facts” had found their way to your doorstep? Back in 1835, many readers in New York ended up believing just such a tale. The New York Sun, then one of the city’s leading newspapers, printed an elaborate six-part series about exotic animals living on the moon (including human-like creatures with wings), purportedly discovered through a gigantic newfangled telescope. The source of the information was Sir John Herschel, who was an actual real-life astronomer but had nothing whatsoever to do with the Sun’s scoop. Rough image of lithograph of “ruby amphitheater” described in the New York Sun newspaper in August 1835. Public domain image. Somebody at the Sun (just who remains something of a mystery) made the whole thing up, in an effort to goose its circulation. The hoax did eventually unravel, although the newspaper never retracted the story. Today, of course, we are battling similarly fake news, found not only in dark corners of the Internet but in mainstream venues such as Facebook. Yet, even in our “post-truth” world, it is still virtually unthinkable that a major newspaper in a major U.S. city would publish information that it knew to be demonstrably false....

Apparently fake news is a thing in France, too. And apparently it was rampant during the run-up to the just-concluded presidential election. Emmanuel Macron won by a large margin, but it seems there were a lot of semi-truths being thrown about. To help combat the spate of fake news surrounding the election, J. Walter Thompson Paris worked with French news organization Liberation to create CheckNews.fr, a search engine staffed by actual human journalists for three days leading up to the election. These journalists answered any search query made with links to multiple sources providing truthful answers to each query....

Google is demoting misleading and offensive content in its search by updating algorithms and offering users new ways to report bad results. The change follows increased attention to flaws in top search results, including the promotion of fake news — and deliberately misleading or false information formatted to look like news — during the 2016 presidential election. Google said it has updated its algorithms to better prioritize “authoritative” content. Content may be deemed authoritative based on signals such as affiliation of a site with a university or verified news source, how often other sites link to the site in question and the quality of the sites that link. “We’ve adjusted our signals to help surface more authoritative pages and demote low-quality content, so that issues similar to the Holocaust denial results that we saw back in December are less likely to appear,” writes Ben Gomes, Google’s executive in charge of search, in a blog post published today....

Following the London terror attack, Jessica McGreal asks: Does social media bring people together or drive them further apart following global acts of terror? As news of London’s terrorist attack unfolded on Wednesday afternoon, it wasn’t the BBC, Sky or any other news network people turned to, but Twitter and Facebook. The news, first reported by eyewitnesses on Twitter, unraveled in real-time in front of the eyes of millions glued to their computer or smartphone screens following the hashtags #Westminster and #Parliament. The storm of updates progressed from on the ground information – sometimes misinformation – to graphic images of those injured, then moved onto blame shaming around race and religion. Donald Trump Junior’s out-of-context tweet about London’s Muslim Mayor Sadiq Khan perhaps best sums up the extent of fake news that emerged....

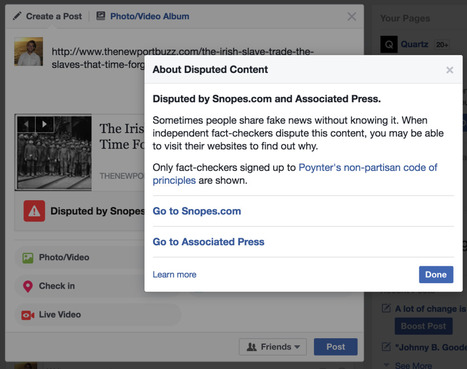

Facebook has started deploying new weapons in its battle against fake news. The election of Donald Trump to the presidency of the United States has led to a lot of soul-searching, not least of which by the world’s largest social network. Facebook has been repeatedly accused of facilitating and magnifying an ecosystem of websites that spread false information and conspiracy theories across the platform. That criticism led Facebook to announce late last year that it would be collaborating with “third-party fact checking organizations” to identify stories that don’t hold up to scrutiny, and warn users when they try to post these stories. The new feature appears to be picking up steam. The Facebook fact-checker has begun flagging a story that was shared widely on the lead-up to St. Patrick’s Day (March 17) that falsely claims thousands of Irish people were brought to the United States as slaves. This is what happens when you try to share the story on Facebook...

The inventor of the world wide web, Sir Tim Berners-Lee, has unveiled a plan to tackle data abuse and fake news. In an open letter to mark the web's 28th anniversary, Sir Tim has set out a five-year strategy amid concerns he has about how the web is being used. Sir Tim said he wants to start to combat the misuse of personal data, which creates a "chilling effect on free speech". He also called for tighter regulation of "unethical" political adverts....

Do you think Facebook's "Fake News" label is working?